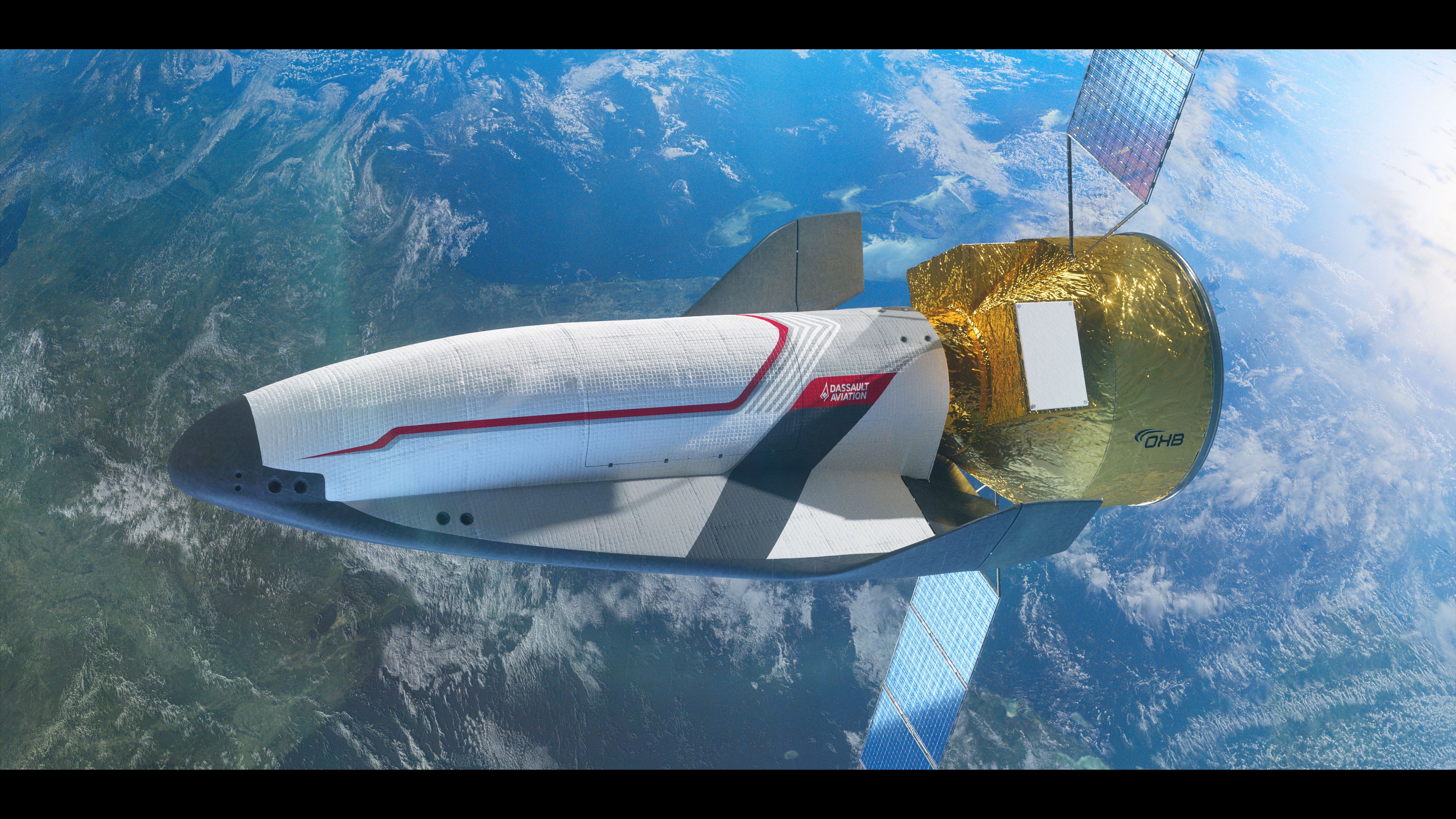

Algorithms Delegated with Life and Death Decisions

Technological progress can lead to the development of armed robots that rely on AI for decision-making in operational use. It is therefore imperative to put precise rules into effect that respect international humanitarian law and define the role of the human in the decision to open fire.

An ongoing technological transformation of warfare is ceding ever more control of weapons to computer systems. The superpowers and other states have embarked on the development of weapons systems that, once activated, can select targets, track them and apply violent force without human supervision. These Autonomous Weapons Systems (AWS) are seen as a way to gain a military edge. China, Russia, Israel and the US wish to use them for force multiplication, with very few human controllers operating swarms of weapons in the air, on land, and on and under the sea.

This has led to considerable and growing international discussion among nation states and civil society, which has highlighted serious concerns about delegating the decision to apply violent force. Discussions have been ongoing under the Convention on Certain Conventional Weapons (CCW) at the UN in Geneva since 2013 and are making headway towards regulations to ensure meaningful human control of weapons systems.

This article summarizes three main clusters of arguments against the use of AWS: compliance with international humanitarian law (IHL), moral issues, and the destabilization of global security.